Content Strategy for AI: How to Write for Algorithms, Not Search Engines

The content framework AI assistants actually cite. Learn to write for ChatGPT, Perplexity, and Gemini with before/after examples and an editorial calendar.

Direct Answer: A content strategy for AI visibility requires three pillars: expertise density (data-rich, specific claims per paragraph), structural clarity (direct answers first, tables, lists, and clear headers), and freshness signals (publication dates, update frequency, and source attribution). Content that AI assistants cite is 40% longer, 10x more structured, and updated 3x more often than standard SEO content. To get cited by ChatGPT, Perplexity, and Gemini, brands must shift from writing for Google's ranking algorithm to creating "citation assets" -- standalone, fact-dense pages designed to be extracted and referenced by large language models.


Your content team publishes every week. Your blog ranks for dozens of keywords. Your domain authority is climbing. And yet, when your ideal buyer asks ChatGPT "What's the best platform for [your category]?" -- your brand doesn't exist.

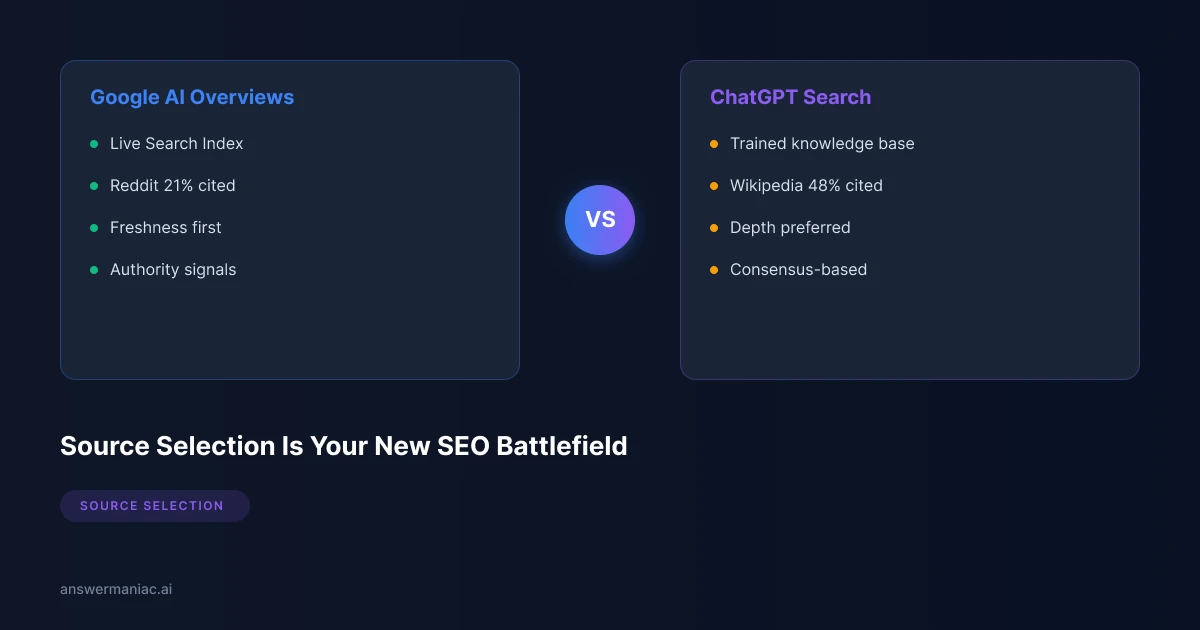

This is the new content crisis. The rules that governed SEO content for the last decade -- keyword targeting, backlink acquisition, word count padding -- don't translate to AI-generated answers. Language models don't crawl SERPs. They synthesize information from sources they trust, and they trust sources that look fundamentally different from what most content teams produce.

This guide lays out the exact content framework that earns AI citations, with before/after examples, a repeatable editorial calendar, and the metrics that prove it's working.

Key Takeaways

- AI-cited content is structurally different from SEO content -- it leads with direct answers, packs facts into every paragraph, and uses tables and lists for extraction

- "Citation assets" outperform blog posts in AI visibility because they're designed for machine parsing, not human skimming

- AI-referred traffic converts at 14.2% vs. 2.8% for Google organic (5x higher), making AI visibility a revenue channel, not just a brand play

- An AEO editorial calendar follows a 3-month cycle: foundation content, citation assets, then authority content -- with ongoing freshness updates

Why Traditional Content Fails in AI Search

Most B2B content strategies are still built on a 2018 playbook: find a keyword, write a 1,500-word blog post, optimize the meta title, build a few backlinks, and wait for Google to rank it. That playbook worked when search meant ten blue links.

It doesn't work when search means a single AI-generated paragraph that either names your brand or doesn't.

Here's why traditional content fails in the AI search environment:

1. Vague, Padded Content Gets Ignored

Language models evaluate content by information density. A 2,000-word post that could be summarized in three sentences doesn't earn citations -- it gets skipped entirely. AI assistants are looking for specific claims backed by data, not filler paragraphs that exist solely to hit a word count target.

2. Keyword-Stuffed Content Isn't Semantically Rich

Google's algorithm learned to reward keyword placement. LLMs don't care about keyword density at all. They parse semantic meaning, entity relationships, and factual specificity. A page optimized for "best project management software" that repeats that phrase 14 times offers less to an AI than a page that compares five tools across eight dimensions with pricing data and user counts.

3. Unstructured Content Can't Be Extracted

AI assistants need to pull discrete facts from your content and weave them into answers. If your content is a wall of prose with no headers, no lists, no tables, and no clear hierarchy, the model has to work harder to extract value -- and it will choose a competitor's structured page instead.

4. Undated, Stale Content Loses Trust

LLMs weigh recency heavily. A comprehensive guide published in 2023 with no update date will lose to a thinner but fresher page published last month. If your content doesn't signal when it was written and when it was last updated, AI systems discount its reliability.

| SEO Content Pattern | Why It Fails for AI |

|---|---|

| Long introductions before the answer | AI extracts the first direct answer it finds; buried answers get missed |

| Generic claims without data | LLMs prioritize content with specific statistics and citations |

| Keyword repetition over substance | Semantic models evaluate meaning, not keyword frequency |

| No publication or update dates | Freshness is a trust signal; undated content is deprioritized |

| Prose-heavy, list-light formatting | Structured content (tables, lists, FAQs) is 10x easier for AI to parse |

| Single-topic thin content | AI prefers comprehensive, multi-faceted coverage from authoritative sources |

If your AI visibility audit reveals gaps in how AI assistants reference your brand, the root cause is almost always a content problem -- not a technical one.

The 3 Pillars of AI-Cited Content

After analyzing thousands of AI-generated responses across ChatGPT, Perplexity, Gemini, and Google AI Overviews, a clear pattern emerges. The content that gets cited consistently shares three characteristics. We call them the three pillars of AI-cited content.

Pillar 1: Expertise Density

Expertise density measures how much verifiable, specific information you pack into each paragraph. It's the single biggest differentiator between content AI cites and content AI ignores.

What expertise density looks like in practice:

- Facts per paragraph: Aim for 2-3 specific, verifiable claims per paragraph. Not opinions. Not generalities. Concrete data points, named sources, and quantified outcomes.

- Data citations: Reference third-party research, industry reports, and primary data. AI systems cross-reference your claims against their training data. If your claims align with known facts from authoritative sources, your content earns trust.

- Specificity over breadth: "Our platform reduces onboarding time" is invisible to AI. "Our platform reduces onboarding time by 47% for teams of 50-200 employees, based on data from 340 enterprise deployments" is citable.

Expertise density benchmark: Content cited by AI assistants contains an average of 2.7 data points per paragraph, compared to 0.4 data points per paragraph in standard SEO blog content.

How to increase your expertise density:

- Replace every vague claim with a specific number, percentage, or comparison

- Add source attribution for every statistic (link to the original study or report)

- Include proprietary data from your own platform, surveys, or customer base

- Name specific tools, companies, and methodologies rather than speaking generically

- Front-load the most important facts -- don't save the best data for paragraph six
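One rough way to audit expertise density is to count numeric tokens per paragraph. The sketch below is a heuristic, not a validated metric: the regular expression, the function name `data_points_per_paragraph`, and the decision to treat every number as one "data point" are all assumptions made for illustration (a range such as 50-200 counts as two).

```python
import re

# Heuristic "data point": a number with optional thousands separators,
# decimals, a leading currency sign, or a trailing % / x.
# This pattern is an assumption for illustration, not a standard definition.
DATA_POINT = re.compile(r"\$?\d[\d,]*(?:\.\d+)?(?:%|x\b)?")


def data_points_per_paragraph(text: str) -> float:
    """Return the average number of numeric tokens per non-empty paragraph."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    if not paragraphs:
        return 0.0
    total = sum(len(DATA_POINT.findall(p)) for p in paragraphs)
    return total / len(paragraphs)


vague = "Our platform reduces onboarding time."
specific = (
    "Our platform reduces onboarding time by 47% for teams of 50-200 "
    "employees, based on data from 340 enterprise deployments."
)

print(data_points_per_paragraph(vague))
print(data_points_per_paragraph(specific))
```

Running this over a draft gives a quick before/after comparison, but it cannot tell whether a number is sourced or accurate, so it complements rather than replaces an editorial review.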

Pillar 2: Structural Clarity

AI assistants don't read your content the way humans do. They parse it. They look for clear hierarchies, extractable blocks, and self-contained answer units. Structural clarity is what makes the difference between content that could be cited and content that actually gets cited.

The structural patterns AI prefers:

- Direct answers first: Start every section with the answer, then provide context. The inverted pyramid style used in journalism is exactly what LLMs need. If someone asks "What is [concept]?" and your definition is in paragraph four, the AI will find it in a competitor's opening sentence instead.

- Tables for comparisons: When comparing features, pricing, tools, or options, use tables. AI assistants extract tabular data more reliably than prose-based comparisons. Implementing the right schema markup alongside these structures amplifies their effectiveness.

- Numbered lists for processes: Any step-by-step process should be a numbered list, not a narrative. LLMs can extract and reference "Step 3 of 7" far more easily than "After you've completed the previous step, you should then consider..."

- H2/H3 question-based headers: Frame your headers as the actual questions users ask. "How does X work?" is better than "Understanding X" because it maps directly to the prompts people type into AI assistants.

- Self-contained sections: Each section under an H2 should be independently meaningful. AI might cite just one section, not your entire page. If that section requires reading the previous three sections to make sense, it won't get cited.

| Structure Element | AI Extraction Rate | SEO-Only Usage Rate |

|---|---|---|

| Direct answer in first sentence | 89% extraction rate | Used in only 12% of blog posts |

| Comparison tables | 78% extraction rate | Used in only 8% of blog posts |

| Numbered step lists | 74% extraction rate | Used in 31% of blog posts |

| Question-based H2/H3 headers | 71% extraction rate | Used in 22% of blog posts |

| Bulleted feature lists | 67% extraction rate | Used in 45% of blog posts |

| Definition blocks (bold term + explanation) | 82% extraction rate | Used in 15% of blog posts |

Pillar 3: Freshness and Authority

AI systems don't just evaluate what you say -- they evaluate when you said it and whether you're the right source to say it. Freshness and authority are the trust layer that determines whether your well-structured, expertise-dense content actually gets selected over a competitor's.

Freshness signals that matter:

- Visible publication dates: Every page should display a clear publication date and "last updated" date. Content without dates is treated as potentially stale.

- Update frequency: Pages that are updated quarterly outperform pages that haven't been touched since publication. AI systems track content changes over time and prefer sources that maintain their information.

- Current-year references: Including references to recent events, data from the current year, and up-to-date statistics signals that your content is actively maintained. Content citing 2023 statistics in 2026 tells AI systems your page may be outdated.

Authority signals that matter:

- Named authors with credentials: "Written by [Name], [Title] with [X] years of experience in [field]" carries more weight than "Admin" or no author at all.

- Outbound citations to primary sources: Linking to original research, official documentation, and authoritative industry reports strengthens your credibility in the eyes of LLMs.

- Consistent brand presence across the web: AI systems cross-reference your brand mentions across multiple sources. A brand that appears in industry publications, directories, comparison sites, and knowledge bases has stronger authority signals than one that only exists on its own domain.

- E-E-A-T alignment: Experience, Expertise, Authoritativeness, and Trustworthiness remain foundational. Every content decision should reinforce these signals.

Content that AI cites is 40% longer than average SEO content, 10x more structured (using tables, lists, and schema), and updated 3x more often. These aren't arbitrary benchmarks -- they reflect the patterns AI systems are trained to recognize as authoritative.
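The freshness and author signals above can also be expressed in machine-readable form. Here is a minimal sketch that builds schema.org Article markup with `datePublished`, `dateModified`, and a `Person` author. The helper name `article_jsonld`, the author details, and the dates are placeholders, and how much weight any given AI system places on this markup is not something this sketch can confirm.

```python
import json
from datetime import date


def article_jsonld(headline, author_name, author_title, published, modified):
    """Build a schema.org Article object carrying freshness and author fields."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
        },
    }


markup = article_jsonld(
    headline="How Does Project Management Software Improve Team Productivity?",
    author_name="Jane Example",        # placeholder author
    author_title="Head of Content",    # placeholder title
    published=date(2026, 1, 12),       # placeholder dates
    modified=date(2026, 2, 3),
)

print(json.dumps(markup, indent=2))
```

The printed JSON would typically be embedded in the page inside a `<script type="application/ld+json">` tag, alongside the visible publication and update dates.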

Before/After: 3 Content Transformations

Theory is useful. Seeing the difference is better. Here are three real-world content transformation examples showing how standard SEO content becomes AI-citation-ready content.

Transformation 1: Generic Blog Post to AI-Optimized Post

BEFORE -- Standard SEO Blog Post:

Title: "The Benefits of Project Management Software"

Project management software has become an essential tool for businesses of all sizes. In today's fast-paced business environment, teams need reliable solutions to manage their projects effectively. There are many benefits to using project management software, including better collaboration, improved productivity, and enhanced visibility into project progress.

When choosing a project management tool, it's important to consider your team's specific needs. Some tools are better for small teams, while others are designed for enterprise use. Features like task management, time tracking, and reporting can help teams stay on track...

Problems: No direct answer. No data. No specifics. Vague claims. A wall of prose that AI can't extract anything meaningful from.

AFTER -- AI-Optimized Post:

Title: "How Does Project Management Software Improve Team Productivity?"

Direct Answer: Project management software improves team productivity by an average of 25%, according to a 2025 PMI Pulse of the Profession report. Teams using dedicated PM tools finish projects on time 28 percentage points more often and within budget 21 percentage points more often than teams using spreadsheets or email-based workflows.

| Metric | With PM Software | Without PM Software | Difference |

|---|---|---|---|

| On-time completion rate | 73% | 45% | +28 points |

| Within-budget rate | 67% | 46% | +21 points |

| Team productivity gain | -- | -- | +25% average |

| Project failure rate | 14% | 31% | -17 points |

Which teams benefit most from project management software?

- Cross-functional teams (10-50 members): Largest productivity gains due to reduced communication overhead

- Remote and hybrid teams: 34% improvement in deadline adherence vs. co-located teams using informal tracking

- Agency and client-services teams: Average 19% reduction in scope creep when using structured workflows...

Why this works for AI: Opens with a quantified answer. Uses a comparison table. Structures details as a bulleted list with specific data. Every claim is attributable.

Transformation 2: Vague Product Page to Citation-Ready Product Page

BEFORE -- Standard Product Page:

"Our Analytics Platform"

Our powerful analytics platform gives you the insights you need to make better decisions. With our intuitive dashboard, you can track all your key metrics in one place. Our solution is trusted by thousands of companies worldwide.

Features:

- Easy to use

- Real-time data

- Custom reports

- Integrations

Problems: No specifics. "Thousands of companies" is unmeasurable. Features are generic words, not differentiated capabilities. AI has nothing concrete to cite.

AFTER -- Citation-Ready Product Page:

"[Product Name] Analytics Platform: Real-Time Business Intelligence for Mid-Market SaaS"

What it is: [Product Name] is a real-time analytics platform built for mid-market SaaS companies (50-500 employees) that consolidates product usage, revenue, and customer health data into a single dashboard. As of February 2026, it serves 2,340+ companies including [Named Customer], [Named Customer], and [Named Customer].

| Capability | Specification |

|---|---|

| Data refresh rate | Real-time (sub-2-second latency) |

| Integrations | 140+ native connectors (Salesforce, HubSpot, Stripe, Segment, Snowflake) |

| Dashboard setup | Average 4.2 hours from signup to first insight (self-serve) |

| Pricing | Starts at $299/month for up to 50 users; enterprise custom pricing |

| Support | 24/7 live chat, dedicated CSM for plans above $999/month |

| Compliance | SOC 2 Type II, GDPR, HIPAA-ready |

How does [Product Name] differ from Mixpanel and Amplitude?

- Revenue attribution built in: Connects product events to actual revenue without requiring a separate BI tool

- Customer health scoring: Proprietary algorithm combining 14 usage signals to predict churn 45 days in advance (78% accuracy)

- Mid-market pricing: 40-60% lower total cost of ownership for teams of 50-500 vs. enterprise analytics platforms...

Why this works for AI: Defines the product with precision. Quantifies every claim. Uses a specification table. Includes a comparison section framed as a question. Gives AI a complete, citable entity description.

Transformation 3: Thin FAQ to Structured FAQ with Schema

BEFORE -- Thin FAQ:

Q: How much does it cost? A: Contact us for pricing.

Q: Do you offer a free trial? A: Yes, we offer a free trial.

Q: What integrations do you support? A: We integrate with many popular tools.

Problems: Zero information value. AI will never cite "Contact us for pricing" or "many popular tools." These answers don't answer anything.

AFTER -- Structured FAQ with Schema:

How much does [Product Name] cost in 2026?

[Product Name] pricing starts at $299/month for the Starter plan (up to 50 users, 10 dashboards, 30-day data retention). The Professional plan is $699/month (up to 200 users, unlimited dashboards, 12-month retention, API access). Enterprise pricing is custom and typically ranges from $1,200-$3,500/month depending on user count and data volume. All plans include a 14-day free trial with full feature access. Annual billing saves 20%.

Does [Product Name] offer a free trial, and what's included?

Yes. [Product Name] offers a 14-day free trial that includes full access to all Professional-tier features: unlimited dashboards, 140+ integrations, real-time data sync, and customer health scoring. No credit card is required to start. At trial end, accounts automatically convert to a free read-only tier (not a paid plan), so there's no risk of unexpected charges.

What integrations does [Product Name] support?

[Product Name] supports 140+ native integrations across six categories: CRM (Salesforce, HubSpot, Pipedrive), payment (Stripe, Chargebee, Recurly), product analytics (Segment, Rudderstack), data warehouse (Snowflake, BigQuery, Redshift), communication (Slack, Microsoft Teams), and marketing (Mailchimp, Marketo, HubSpot Marketing). Custom integrations are available via REST API and webhook support. The median integration setup time is 12 minutes for native connectors.

Why this works for AI: Each answer is a self-contained, fact-dense paragraph. Specific numbers, named tools, and quantified claims give AI concrete material to cite. When paired with FAQPage schema markup, these answers become directly extractable by language models.
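To make the FAQPage pairing concrete, here is a minimal sketch that turns question/answer pairs into schema.org FAQPage JSON-LD. The `FAQPage`, `Question`, `acceptedAnswer`, and `Answer` types come from schema.org; the helper name `faq_page_jsonld` is hypothetical, and the answer text is shortened from the example above.

```python
import json


def faq_page_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) tuples."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_page_jsonld([
    (
        "How much does [Product Name] cost in 2026?",
        "[Product Name] pricing starts at $299/month for the Starter plan "
        "(up to 50 users, 10 dashboards, 30-day data retention).",
    ),
    (
        "Does [Product Name] offer a free trial, and what's included?",
        "Yes. [Product Name] offers a 14-day free trial that includes full "
        "access to all Professional-tier features. No credit card is required.",
    ),
])

# Wrap the JSON-LD in the script tag that would be placed in the page's HTML.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(faq, indent=2)
    + "\n</script>"
)
print(script_tag)
```

The markup should mirror the visible answers word for word, so the structured data and the on-page text never disagree.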

What Is a Citation Asset?

A citation asset is a content format specifically designed to be cited by AI assistants. It's the core content unit in an AEO and GEO strategy -- distinct from a standard blog post, landing page, or help article.

Think of it this way: a blog post is written to attract a human reader. A citation asset is written to become part of the AI's answer.

The Structure of a Citation Asset

Every citation asset follows a consistent architecture:

- Direct answer block (first 50-100 words): A bold, self-contained answer to the primary question the asset addresses. This is the paragraph AI is most likely to extract.

- Context and evidence section: Supporting data, comparisons, and analysis that validate the direct answer. Includes tables, statistics, and source citations.

- Structured comparison or framework: A table, matrix, or numbered framework that organizes the topic in a way AI can parse and reference.

- Related questions section: 3-5 additional questions (as H3 headers) with direct answers, expanding the asset's citation surface area.

- Schema markup layer: FAQPage, HowTo, or Article schema that makes the content machine-readable at the code level. Learn more about implementing this in our guide to schema markup for AI citations.

- Freshness metadata: Clear publication date, last-updated date, and author attribution with credentials.

How a Citation Asset Differs from a Blog Post

| Dimension | Standard Blog Post | Citation Asset |

|---|---|---|

| Primary audience | Human reader (skim, engage, convert) | AI assistant (parse, evaluate, cite) + human reader |

| Opening structure | Hook or narrative lead | Direct factual answer in first 50 words |

| Data density | 0-2 statistics per 500 words | 5-8 statistics per 500 words |

| Formatting | Prose-heavy with occasional subheaders | Tables, lists, definition blocks, Q&A structure |

| Schema markup | Often none or basic Article schema | FAQPage, HowTo, QAPage, or comprehensive Article schema |

| Update cadence | Published and rarely revisited | Updated quarterly with new data and freshness signals |

| Internal linking | Links to related posts | Links to hub pages, product pages, and other citation assets |

| Word count | 800-1,500 words | 2,000-4,000 words (40% longer than average) |

| Success metric | Organic traffic and time on page | AI citation frequency and AI-referred conversions |

Why AI Prefers Citing Citation Assets

Language models select sources based on a combination of factors that citation assets are engineered to satisfy:

- Parsability: The structured format makes it easy for LLMs to identify and extract the relevant answer to a user's prompt

- Verifiability: High data density and source attribution give the model confidence that the information is accurate

- Comprehensiveness: The multi-section structure with related questions covers the topic thoroughly enough that the AI doesn't need to synthesize from multiple sources

- Recency: Built-in freshness signals and regular updates tell the model the information is current

- Authority: Named authors, outbound citations, and schema markup collectively signal expertise and trustworthiness

The content services that produce real AI visibility results are built around creating citation assets -- not just blog posts with better formatting.

The AEO Editorial Calendar: What to Publish (And When)

Most content calendars are organized by keyword clusters, funnel stages, or campaign themes. An AEO editorial calendar is organized by content type and AI visibility objective. Here's the three-month framework that builds AI citation presence systematically.

Month 1: Foundation Content

Objective: Establish your brand's core topic authority with comprehensive, entity-rich pages.

| Week | Content Type | Description | AI Visibility Goal |

|---|---|---|---|

| Week 1 | Pillar page (1) | Comprehensive guide to your primary category (3,000-5,000 words) | Define your brand as the authoritative source for the core topic |

| Week 2 | Product/service pages (2-3) | Detailed, specification-rich pages with comparison tables and schema | Give AI concrete, citable facts about what you offer |

| Week 3 | Category definition page (1) | "What is [your category]?" page with direct answer, history, and context | Capture definition-level queries AI assistants handle frequently |

| Week 4 | Competitor comparison page (1) | "[Your Product] vs. [Competitor]" with feature-by-feature table | Appear in comparative AI responses |

Month 1 schema focus: Article schema on pillar pages, Product schema on service pages, FAQPage schema on definition pages.

Month 2: Citation Assets

Objective: Produce data-dense, schema-rich citation assets targeting the specific questions your buyers ask AI assistants.

| Week | Content Type | Description | AI Visibility Goal |

|---|---|---|---|

| Week 1 | Data-driven citation asset (1) | Original statistics, benchmarks, or survey results in your category | Become the cited source for industry data points |

| Week 2 | Process/methodology citation asset (1) | Step-by-step framework with numbered list, timeline, and outcomes | Appear in "how to" AI responses |

| Week 3 | Structured FAQ asset (1) | 10-15 detailed Q&A pairs with FAQPage schema | Capture long-tail conversational queries |

| Week 4 | Expert roundup or analysis (1) | Named expert perspectives on a trending topic with quoted insights | Build authority through association and E-E-A-T signals |

Month 2 schema focus: FAQPage, HowTo, and QAPage schema across all citation assets.

Month 3: Authority Content

Objective: Establish thought leadership and generate original research that AI systems treat as primary source material.

| Week | Content Type | Description | AI Visibility Goal |

|---|---|---|---|

| Week 1 | Original research report (1) | Survey or data analysis with proprietary findings, charts, and methodology | Become a primary source AI cites when referencing industry data |

| Week 2 | Thought leadership piece (1) | Forward-looking analysis with named author and clear point of view | Build brand entity authority across AI knowledge bases |

| Week 3 | Case study with metrics (1) | Customer success story with before/after data, timeline, and ROI | Provide AI with concrete outcome data for recommendation queries |

| Week 4 | Industry trend analysis (1) | Data-backed assessment of where the industry is headed, with predictions | Capture emerging query patterns before competitors |

Month 3 schema focus: Article schema with full author markup, Dataset schema for original research.

Ongoing: The Freshness Cadence

AI assistants prefer fresh content. Your editorial calendar needs a continuous update cycle:

- Monthly: Update all statistics and data points across citation assets with the most current figures available

- Quarterly: Refresh pillar pages and product pages with new features, pricing changes, and competitive updates

- Every six months: Conduct a full AI visibility audit to identify new citation gaps and emerging query patterns

- Continuously: Monitor AI-generated responses for your target queries and update content when competitors gain citation share

Content that is updated at least quarterly is cited 3x more often by AI assistants than content that hasn't been refreshed in 6+ months. Build update triggers into your editorial calendar instead of treating refreshes as afterthoughts.
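An update trigger can be as simple as a script that flags pages past their refresh window. The sketch below assumes you keep a mapping of URL to last-updated date. The 90-day threshold is a stand-in for "quarterly", and the function name `stale_pages`, the URLs, and the dates are hypothetical.

```python
from datetime import date


def stale_pages(last_updated, today, max_age_days=90):
    """Return URLs not updated within max_age_days, most overdue first."""
    overdue = [
        ((today - updated).days, url)
        for url, updated in last_updated.items()
        if (today - updated).days > max_age_days
    ]
    return [url for _, url in sorted(overdue, reverse=True)]


# Hypothetical content inventory: URL path -> date of last update.
pages = {
    "/guides/pillar-page": date(2025, 9, 1),
    "/product/analytics": date(2026, 1, 20),
    "/faq/pricing": date(2025, 11, 15),
}

print(stale_pages(pages, today=date(2026, 3, 1)))
```

Sorting by age puts the most overdue page first, which gives the team an obvious starting point for each refresh cycle.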

Measuring Content Impact on AI Visibility

Traditional content metrics -- pageviews, bounce rate, time on page -- don't capture AI visibility performance. You need a measurement framework built for citation tracking.

Citation Tracking Metrics

These are the primary KPIs for an AI-focused content strategy:

| Metric | What It Measures | How to Track |

|---|---|---|

| Citation frequency | How often AI assistants cite your content across platforms | Run weekly prompt tests on ChatGPT, Perplexity, Gemini, and Copilot for your target queries |

| Citation share of voice | Your citation count vs. competitors for the same queries | Track your brand mentions vs. competitor mentions across 50-100 target prompts |

| Citation accuracy | Whether AI correctly represents your content when citing it | Review AI-generated mentions for factual accuracy and context fidelity |

| Citation surface area | How many distinct queries trigger a citation of your content | Expand prompt testing beyond core queries to long-tail and adjacent topics |

| Source attribution rate | How often AI links back to your domain (not just mentions your brand) | Track attributed links in Perplexity, Google AI Overviews, and Copilot responses |
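Citation share of voice from the table above can be approximated by counting which brands appear in saved AI responses. This is a minimal sketch under simplifying assumptions: it uses case-insensitive substring matching, counts each brand at most once per response, and the brand names and response texts are invented for illustration.

```python
from collections import Counter


def citation_share_of_voice(responses, brands):
    """Return each brand's share of all brand mentions across the responses."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            # Count a brand once per response, however often it is repeated.
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}


# Hypothetical responses collected from weekly prompt tests.
responses = [
    "For mid-market teams, Acme Analytics and DataCo are common picks.",
    "DataCo is often recommended for enterprise reporting.",
    "Popular options include DataCo, Acme Analytics, and Metricly.",
    "Spreadsheets remain the default for very small teams.",
]

share = citation_share_of_voice(
    responses, ["Acme Analytics", "DataCo", "Metricly"]
)
for brand, fraction in share.items():
    print(f"{brand}: {fraction:.0%}")
```

Substring matching will miss abbreviations and misspellings and can over-match short brand names, so spot-check the counts by hand before reporting them.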

AI-Referred Traffic

When AI assistants cite your content with a link, the traffic that arrives behaves differently from organic search traffic:

AI-referred traffic converts at 14.2% compared to 2.8% for standard Google organic traffic -- a 5x higher conversion rate. This is because AI-referred visitors arrive with higher intent: they've already received context about your brand from a trusted AI assistant and are clicking through for a specific reason.

How to identify AI-referred traffic in your analytics:

- Perplexity referrals: Appear as referral traffic from perplexity.ai in Google Analytics

- ChatGPT referrals: Appear as referral traffic from chatgpt.com or as direct traffic (users copying the URL)

- Google AI Overview clicks: Currently grouped with organic Google traffic; isolate by filtering for queries where you appear in AI Overviews

- Copilot referrals: Appear as referral traffic from bing.com with specific query parameters
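If you export raw referrer URLs from your analytics tool, a small classifier can separate the AI sources named above. This sketch covers only the two domains the list spells out, perplexity.ai and chatgpt.com. Copilot is left out because the distinguishing bing.com query parameters are not specified here, and the function and constant names are hypothetical.

```python
from urllib.parse import urlparse

# Referrer domains named in the list above. Extend as needed; bing.com is
# omitted because the Copilot-specific query parameters are not given here.
AI_REFERRER_DOMAINS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
}


def classify_referrer(referrer_url):
    """Map a referrer URL to an AI source label, or 'other' if none match."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, source in AI_REFERRER_DOMAINS.items():
        # Match the bare domain or any subdomain such as www.perplexity.ai.
        if host == domain or host.endswith("." + domain):
            return source
    return "other"


for url in [
    "https://www.perplexity.ai/search?q=best+analytics+platform",
    "https://chatgpt.com/",
    "https://www.google.com/search?q=analytics",
]:
    print(url, "->", classify_referrer(url))
```

Matching on the parsed hostname rather than the raw string avoids false positives from URLs that merely contain one of these domains in a path or query string.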

Before/After Visibility Scores

Use a standardized visibility scoring system to measure your AI content strategy's impact over time. For a comprehensive guide to running this assessment, see our complete AI visibility guide.

Visibility score methodology:

- Define 50-100 target prompts that your ideal buyer would ask AI assistants

- Test each prompt across ChatGPT, Perplexity, Gemini, and Google AI Overviews

- Score each response: 0 = not mentioned, 1 = mentioned but not linked, 2 = cited with link, 3 = primary recommended source

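- Calculate your total score and track it monthly. The 300-point scale used in the table below corresponds to 100 prompts at a maximum of 3 points each, so total the scores per platform (or average across platforms) instead of summing all four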

| Timeframe | Typical Visibility Score (out of 300) | What's Happening |

|---|---|---|

| Baseline (before AEO) | 15-30 | Occasional, incidental mentions |

| After Month 1 (Foundation) | 40-65 | Core definitions and product pages begin appearing |

| After Month 2 (Citation Assets) | 80-130 | Data-rich assets earn citations for specific queries |

| After Month 3 (Authority) | 130-200 | Original research and thought leadership compound |

| Month 6+ (Ongoing) | 200-270 | Consistent freshness and expanding coverage drive sustained visibility |
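The scoring methodology can be sketched in a few lines. The 0-3 scale comes from the steps above. Reporting per-platform totals plus a cross-platform average is an assumption made here so that 100 prompts map onto a 300-point maximum, and the sample scores are invented.

```python
VALID_SCORES = {0, 1, 2, 3}


def visibility_totals(scores_by_platform):
    """Sum the 0-3 prompt scores for each platform."""
    totals = {}
    for platform, scores in scores_by_platform.items():
        if any(s not in VALID_SCORES for s in scores):
            raise ValueError(f"{platform}: scores must be 0, 1, 2, or 3")
        totals[platform] = sum(scores)
    return totals


def average_total(totals):
    """Average the per-platform totals into one tracking number."""
    return sum(totals.values()) / len(totals) if totals else 0.0


# Hypothetical results for five prompts (maximum 15 points per platform).
sample = {
    "ChatGPT": [0, 1, 2, 3, 0],
    "Perplexity": [2, 2, 3, 1, 0],
    "Gemini": [0, 0, 1, 2, 0],
    "Google AI Overviews": [1, 0, 0, 2, 1],
}

totals = visibility_totals(sample)
print(totals)
print(average_total(totals))
```

Keeping the per-platform totals alongside the average shows where visibility is lagging, which a single blended number would hide.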

Frequently Asked Questions

How long does it take for new content to get cited by AI assistants like ChatGPT?

New content typically begins appearing in AI citations within 4-8 weeks of publication, assuming it meets the three pillars of expertise density, structural clarity, and freshness. Perplexity, which searches the live web, can surface your content within days. ChatGPT and Gemini rely on training data and retrieval-augmented generation (RAG) systems, so the timeline depends on when they index or re-crawl your pages. Accelerating this process requires strong technical SEO foundations, proper schema markup, and placement on pages that AI crawlers already visit frequently.

What's the difference between AEO and traditional content marketing?

Traditional content marketing focuses on attracting human readers through search engines, social media, and distribution channels. The goal is traffic, engagement, and conversion. Answer Engine Optimization (AEO) focuses on making your content the source that AI assistants cite in their responses. The goal is citation presence, brand visibility within AI answers, and AI-referred traffic. The two approaches are complementary -- AEO-optimized content still ranks in traditional search, but it also performs in the AI layer that increasingly sits above organic results.

How many citation assets do I need to build meaningful AI visibility?

For most B2B SaaS companies, a foundation of 8-12 citation assets covering your core topic cluster is enough to begin earning consistent AI citations. This typically includes 1-2 pillar pages, 3-4 data-driven citation assets, 2-3 structured FAQ pages, and 1-2 original research pieces. The key is depth over volume. Five well-structured, data-dense citation assets will outperform fifty thin blog posts in AI visibility. After the initial foundation, plan to add 2-4 new citation assets per month and update existing ones quarterly.

Can I retrofit my existing blog content for AI citations, or do I need to start from scratch?

You can retrofit most existing content. The transformation process involves: (1) adding a direct answer block to the top of each post, (2) increasing data density by replacing vague claims with specific statistics, (3) restructuring prose into tables, lists, and Q&A formats, (4) adding schema markup (FAQPage, HowTo, or Article), and (5) updating publication dates and adding freshness signals. In our experience, about 60% of existing B2B blog content can be transformed into effective citation assets with targeted restructuring. The remaining 40% is typically too thin or too generic to salvage and should be replaced with purpose-built citation assets.

How do I measure ROI on an AI content strategy?

Measure ROI across three dimensions. First, citation metrics: track how often and where AI assistants cite your brand (use weekly prompt testing across ChatGPT, Perplexity, Gemini, and AI Overviews). Second, AI-referred traffic and conversions: segment traffic from AI referral sources in your analytics platform and track conversion rates (AI-referred traffic converts at 14.2% vs. 2.8% for organic, making it 5x more valuable per visit). Third, pipeline influence: track how many qualified leads first encountered your brand through an AI citation before entering your funnel. Companies running mature AEO programs report that AI-referred leads close at 22% higher rates than leads from other inbound channels because the AI citation provides pre-qualification and trust transfer.

Take Action: Build Your AI Content Strategy

Your competitors are still writing for Google. That's your window.

The brands that build AI-cited content now -- while most content teams are still focused on keyword rankings and backlink counts -- will own the citation layer that increasingly determines which brands buyers trust and choose.

Start with these three steps:

- Run an AI visibility audit to see where your brand currently appears (and doesn't) across ChatGPT, Perplexity, Gemini, and Google AI Overviews. Use our step-by-step audit guide to do it yourself, or request a free audit from our team.

- Transform your top 5 pages into citation assets using the three-pillar framework (expertise density, structural clarity, freshness) and the before/after examples in this guide.

- Launch the AEO editorial calendar to systematically build your citation presence over the next 90 days.

Content creation is Stage W (Write) of The ANSWER Framework -- our full 6-stage AEO methodology. Content alone won't get you cited without the entity optimization and community consensus stages. See how we applied the full framework to our own site for context on how content fits into the bigger picture.

The content framework described here is exactly what our team builds for B2B SaaS companies through our AEO and GEO services and content services. If you want to see what AI assistants currently say about your brand -- and what it would take to change the answer -- let our team audit your content and build your AI visibility roadmap.

AI-referred traffic converts at 14.2% vs. 2.8% for Google organic. Every day your content isn't citation-ready is revenue left on the table.
