5 Things We Learned Applying The ANSWER Framework to Our Own Site

We started at 34/100 AI Visibility Score. After 30 days of applying The ANSWER Framework to answermaniac.ai, here are the 5 biggest lessons — and what they mean for your business.

Direct Answer: When we applied The ANSWER Framework to answermaniac.ai, we started at a 34/100 AI Visibility Score. The five key lessons: entity optimization beats schema markup 4.5x in impact, Perplexity responds fastest among AI platforms, G2 reviews are a stronger citation signal than backlinks, content designed for AI queries outperforms SEO content in citations, and brand search volume appears to correlate with non-branded citation frequency. Read the full week-by-week case study.

It's one thing to tell clients "trust our methodology." It's another to publish your own numbers.

We ran The ANSWER Framework on answermaniac.ai for 30 days. Starting score: 34/100. An AEO agency that AI didn't recommend. The cobbler's children situation.

Instead of repeating the full timeline here — the complete week-by-week breakdown is on our case study page — I want to focus on the five things we learned that changed how we approach client engagements.

1. Entity Optimization Beats Schema Markup 4.5x

This was the biggest revelation and it keeps holding across client sites.

During Week 2, we implemented comprehensive schema (Organization, Service, FAQPage, Article, HowTo) AND did full entity reconciliation (aligning LinkedIn, Crunchbase, G2, Google Business Profile, and our website).

The schema changes accounted for roughly 4 points of improvement. The entity reconciliation added another 18, which works out to 18 / 4 = 4.5 times the schema gain.

That 4.5x multiplier isn't a fluke. We've seen similar ratios across a dozen client engagements since. The SEO community has oversold schema as the AEO silver bullet. Schema is table stakes. Entity optimization — making every platform tell the same story about your brand — is where AI gains the confidence to cite you.

If you're doing AEO and you haven't reconciled your entity data across platforms, start there. Our entity optimization guide covers the technical process.
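To make "every platform tells the same story" concrete, here is a minimal Python sketch of a consistency check. It is not our internal tooling: the `profiles` data, the field names, and the `find_mismatches` helper are all hypothetical, and it assumes you have already copied each platform's profile fields into a dictionary by hand or by export.

```python
from collections import defaultdict


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(value.lower().split())


def find_mismatches(profiles: dict) -> dict:
    """Map each inconsistent field to {normalized value: [platforms using it]}."""
    by_field = defaultdict(lambda: defaultdict(list))
    for platform, fields in profiles.items():
        for field, value in fields.items():
            by_field[field][normalize(value)].append(platform)
    # A field is inconsistent when platforms disagree on its normalized value.
    return {f: dict(v) for f, v in by_field.items() if len(v) > 1}


# Hypothetical profile data, for illustration only.
profiles = {
    "website": {"name": "Example Co", "category": "AEO agency"},
    "linkedin": {"name": "Example Co", "category": "Marketing services"},
    "g2": {"name": "Example Co.", "category": "AEO agency"},
}

mismatches = find_mismatches(profiles)
for field, variants in mismatches.items():
    print(f"{field}: {variants}")
```

Every field this flags is a place where two platforms describe the brand differently. The normalization here is deliberately strict, so "Example Co" and "Example Co." count as a mismatch worth a human look.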

2. Perplexity Responds Fastest (And That's Your Testing Ground)

During Week 4, we monitored our AI Visibility Score daily. The platform response times were revealing:

- Perplexity: Days. Because it searches the web in real-time, new citation assets appeared in results almost immediately.

- Gemini: Weeks. Google's infrastructure meant GBP and schema changes translated to citations within 2-3 weeks.

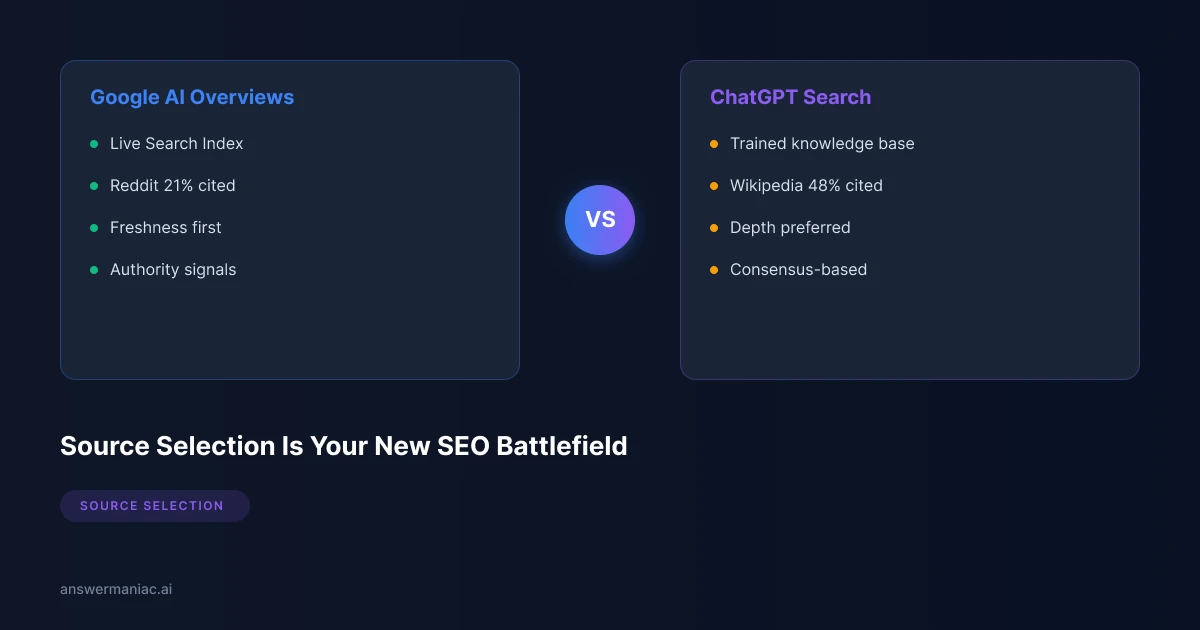

- ChatGPT: Slowest. Training data lag meant citations started appearing toward the end of the 30-day period.

- Copilot: Remained stubborn throughout. Microsoft's ecosystem seems to want longer-term Bing and LinkedIn authority.

The practical takeaway: use Perplexity as your testing ground. If a citation asset isn't getting picked up by Perplexity within a week of publishing, the content probably needs work. Don't wait 60 days to find out ChatGPT isn't citing it either.
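The one-week rule above can be written down as a small triage check. This is a sketch under assumptions: the `needs_rework` function and the dates are hypothetical, and it assumes you log by hand the date each asset was published and the date Perplexity first cited it (if ever).

```python
from datetime import date
from typing import Optional

PICKUP_WINDOW_DAYS = 7  # "within a week of publishing", per the rule above


def needs_rework(published: date, first_cited: Optional[date], today: date) -> bool:
    """Return True if the asset went a full window without a Perplexity citation."""
    if first_cited is not None and (first_cited - published).days <= PICKUP_WINDOW_DAYS:
        return False  # picked up in time
    # Not cited in time: flag it only once the window has actually elapsed.
    return (today - published).days > PICKUP_WINDOW_DAYS


# Hypothetical log entries, for illustration only.
today = date(2025, 1, 10)
print(needs_rework(date(2025, 1, 1), None, today))              # uncited after 9 days
print(needs_rework(date(2025, 1, 1), date(2025, 1, 4), today))  # cited on day 3
print(needs_rework(date(2025, 1, 6), None, today))              # too early to judge
```

An asset published fewer than seven days ago is never flagged, so the check doesn't punish content that simply hasn't had its week yet.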

3. G2 Reviews > Backlinks for AI Citations

This one surprised us more than anything else.

After our first batch of genuine G2 reviews landed, we saw a noticeable uptick in Perplexity citations specifically. The platform explicitly sources G2 data — you can see it in the citation links.

Think about what this means for B2B companies: our results suggest a company with 200 genuine G2 reviews can get cited more reliably than a competitor with 2,000 backlinks and zero reviews. Traditional SEO authority (domain rating, backlink count) doesn't map to AI citation authority the way you'd expect.

This is why "Earn" is a dedicated stage in The ANSWER Framework, not an afterthought. Community consensus — real humans on real platforms talking about your product — is what gives AI the confidence to recommend you.

4. Content for AI Queries ≠ Content for SEO Keywords

Every citation asset we created during Week 3 was structured around a specific question someone asks ChatGPT, not a keyword someone types into Google.

The difference matters more than I expected. A blog post optimized for the keyword "answer engine optimization" and a citation asset structured to answer the query "what is the best approach to getting cited by ChatGPT" look fundamentally different:

- The SEO post leads with definitions and broad context

- The citation asset leads with a direct answer block — a quotable paragraph that AI can extract and use verbatim

Our direct answer blocks at the top of citation assets got quoted by Perplexity more than any other content format we tested. Pages with comprehensive FAQ schema also got picked up faster than similar pages without it.
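For readers who haven't written FAQ schema before, here is a minimal Python sketch that builds FAQPage JSON-LD from question and answer pairs. The `faq_jsonld` helper is a hypothetical name. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity`, `name`, `acceptedAnswer`, and `text` properties are standard schema.org vocabulary. The sample pair is taken from the FAQ at the end of this post.

```python
import json


def faq_jsonld(qa_pairs: list) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    (
        "What's The ANSWER Framework?",
        "Our proprietary 6-stage AEO methodology: "
        "Audit, Navigate, Structure, Write, Earn, Refine.",
    ),
])
print(markup)
```

The output goes inside a `<script type="application/ld+json">` tag on the page. Keep each answer text identical to the visible answer on the page, so the markup and the direct answer block say the same thing.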

If you're still writing content for Google and hoping AI follows, you're leaving citations on the table. Our content strategy guide covers the structural differences in detail.

5. Brand Search Volume Unlocks Non-Branded Citations

This finding also caught us off guard.

During Week 4, our LinkedIn activity drove a spike in people searching "AnswerManiac" directly. Around the same time, AI models seemed to increase our citation frequency for non-branded queries — things like "best AEO agency" and "AI visibility optimization."

The correlation was clear enough across our 30-day window that we started investigating it. Our working theory: when humans search for your brand name, it signals to AI models that you're a known entity worth recommending. Thirty days on one site can't prove causation, but brand search volume appears to be a citation signal, much as many SEOs have long treated it as a ranking signal for Google.
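If you want to check for the same pattern on your own site, a Pearson correlation between weekly brand searches and weekly non-branded citations is a reasonable first pass. The sketch below is illustrative only: the numbers are made up, not our measurements, and `pearson` is a plain hand-rolled implementation, not part of any tracker.

```python
from math import sqrt


def pearson(xs: list, ys: list) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length, at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Made-up weekly counts, for illustration only (NOT our data).
brand_searches = [10, 12, 15, 30]
non_branded_citations = [1, 1, 2, 5]

r = pearson(brand_searches, non_branded_citations)
print(f"r = {r:.2f}")
```

A value near 1 means the two series rise together. With only four weekly data points, treat it as a prompt to keep measuring, not as proof.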

The implication: your LinkedIn posts, podcast appearances, conference talks, and PR aren't just marketing activities. They may be feeding the brand search signals that AI models appear to use when deciding whether you're citation-worthy.

What Didn't Work

Transparency cuts both ways. Here's what produced zero measurable impact during our 30-day test:

- Guest posts on generic marketing blogs. AI doesn't seem to weight these the way Google does. We got backlinks but zero citation impact.

- Social media posting frequency. We tried 3x/day vs 1x/day. No difference in citation rates. Quality and engagement mattered. Volume didn't.

- Copilot in general. Microsoft's ecosystem seems to require sustained Bing visibility and LinkedIn authority over a longer timeline than 30 days.

What This Means for You

If we — an AEO agency — started at 34/100 and needed a structured 30-day effort to get cited, most B2B companies are starting from a tougher position.

That's the opportunity. Your competitors haven't started. The ANSWER Framework provides the roadmap, and we proved it works on ourselves before asking any client to trust it.

Three ways to move forward:

- Run your free AI Visibility Score — 60 seconds, see exactly where you stand

- Read the full case study — every week, every action, every result

- Book a strategy call — we'll walk through how The ANSWER Framework applies to your specific industry

FAQ

Are these real numbers?

Yes. Every data point comes from our AI Visibility Tracker. We're updating the full case study page with 60-day, 90-day, and 180-day measurements as they come in.

Will this work for my industry?

The ANSWER Framework is industry-agnostic. We've applied it across franchise software, compliance/RegTech, fleet management, cybersecurity, and more. The stages are the same — the content and entity strategy adapts to your vertical.

What's The ANSWER Framework?

Our proprietary 6-stage AEO methodology: Audit, Navigate, Structure, Write, Earn, Refine. Full breakdown here.
