AI Citation Analytics: How to Measure Your Brand's AI Presence in 2026

Track AI mentions and measure your brand's visibility across major AI platforms efficiently and accurately.

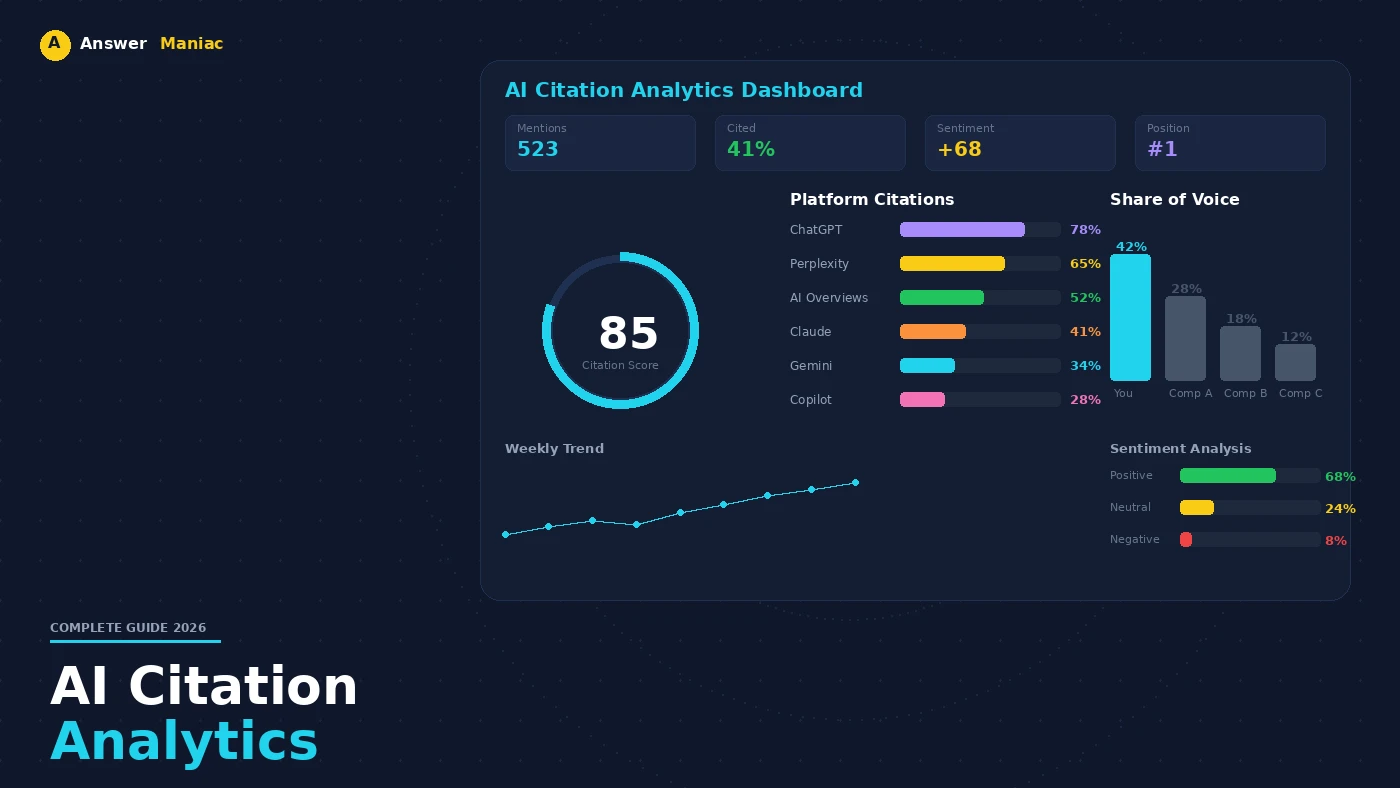

Direct Answer: AI citation analytics measures how often and prominently AI systems reference your brand across ChatGPT, Gemini, Claude, Perplexity, and Copilot. Core metrics include citation frequency, share of voice, citation quality, sentiment, and coverage. Track these by running structured prompts weekly, using platforms like Siftly or Ahrefs AI Visibility, and benchmarking against 3-5 direct competitors. This goes beyond traditional SEO: it captures influence in AI-generated answers where zero-click decisions happen.


Knowing how often, and how prominently, AI systems reference your brand shows how visible and authoritative it is in AI-generated responses. Tracking mentions across ChatGPT, Gemini, Claude, Perplexity, and Copilot gives you concrete input for strategy and content optimization.

Understanding these patterns helps you shape content that matches conversational queries and improves measurable brand exposure. Keep reading to learn how to track, benchmark, and optimize your AI presence.

Key Takeaways

- AI citation analytics quantifies your brand's visibility and authority in AI-generated content across multiple LLM platforms.

- Core metrics like citation frequency, share of voice, sentiment, and coverage reveal trends and optimization opportunities.

- Automated platforms and structured content strategies enhance AI recognition, enabling measurable gains in traffic and brand authority.

What Is AI Citation Analytics And Why Does It Matter For Brands?

Think of AI citation analytics as tracking your brand's mentions inside chatbots like ChatGPT or Claude. It's not just a count. It's understanding how often you show up, in what context, and whether the AI treats you as a trusted source or a footnote.

This shift represents a fundamental change in how digital presence is quantified. As noted in research on Large Language Model Optimization:

"Visibility now hinges on being cited, referenced, or summarized by AI systems... LLMO (Large Language Model Optimization) is more than a conceptual shift; it is an emerging set of practices aimed at improving how a brand is represented, if at all, within the outputs of large language models. Each prompt's output is logged and categorized by: Presence (Is the brand mentioned?), Attribution (Is the source identifiable?), and Position (How prominently does the brand appear relative to others?)" - The LLMO White Paper[1]

Here's the breakdown in simple terms:

- The Core Idea: It measures your brand's visibility and authority within AI-generated answers. This is what AI visibility tracking quantifies at scale across platforms.

- Why It Matters: If AI doesn't cite you for relevant topics, you're invisible to a growing number of people who use chatbots to find answers and recommendations.

- What Gets Tracked: How often your brand is mentioned, in what context, with what perceived authority, and in what tone.

Ignoring this is a risk. Brands monitoring these citations early can shape how AI presents them, ensuring they stay relevant. For the methodology behind building this presence systematically, The ANSWER Framework lays out the full Audit-Navigate-Structure-Write-Earn-Refine process.

Which Core Metrics Accurately Measure AI Brand Presence?

To measure how AI "sees" your brand, you need tracking that goes beyond simple mention counts. The right metrics describe your visibility, authority, and reach within AI-generated answers across multiple platforms.

Here are the core ones that matter:

- Citation Frequency: The raw count of how often AI models like ChatGPT mention your brand. It's your baseline volume. Tracking the rate of change over time is what we call citation velocity.

- Share of Voice: How your mention volume stacks up against key competitors in AI responses.

- Citation Quality: This checks if the AI cites you as a definitive authority or just a passing example. Quality beats quantity.

- Sentiment: Is the AI's tone when mentioning you positive, neutral, or negative? It reflects perceived brand tone.

- Coverage & Impressions: An estimate of how many users might see those AI answers, based on query volume. This gauges potential reach.

| Metric | What It Measures | Output |
|---|---|---|
| Citation frequency | Mention count | Volume trend |
| Share of voice | % vs. competitors | Positioning |
| Citation quality | Authority level | Trust signal |
| Sentiment | Positive/neutral/negative | Brand tone |
| Coverage & impressions | Exposure estimate | Reach |

Tools from platforms like Ahrefs or Siftly can help visualize these trends in a dashboard, turning raw data into a strategy for improving AI visibility. For a deeper comparison of how this differs from traditional search metrics, see our breakdown of AI visibility vs SEO.
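The quantitative metrics above reduce to simple arithmetic once answers are logged. The following is a minimal sketch, not how any of the named tools compute their numbers: the `runs` log format, the brand names, and all function names are assumptions made for illustration, and the impressions estimate is only one plausible reading of "based on query volume".

```python
from collections import Counter

# Hypothetical log: one entry per (prompt, platform) run, listing the brands
# the answer mentioned. Brand names and data are made up for illustration.
runs = [
    {"prompt": "best crm for startups", "platform": "chatgpt", "brands": ["Acme", "Globex"]},
    {"prompt": "best crm for startups", "platform": "gemini", "brands": ["Globex"]},
    {"prompt": "crm with email automation", "platform": "claude", "brands": ["Acme"]},
    {"prompt": "crm with email automation", "platform": "perplexity", "brands": []},
]


def citation_frequency(runs, brand):
    """Number of logged answers that mention the brand at least once."""
    return sum(1 for run in runs if brand in run["brands"])


def share_of_voice(runs, brand):
    """Brand mentions as a fraction of all tracked-brand mentions in the log."""
    counts = Counter(b for run in runs for b in set(run["brands"]))
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


def citation_velocity(previous_count, current_count):
    """Relative change in citation frequency between two periods."""
    if previous_count == 0:
        return None  # undefined without a baseline
    return (current_count - previous_count) / previous_count


def estimated_impressions(runs, brand, monthly_query_volume):
    """Rough reach: fraction of answers citing the brand times query volume."""
    if not runs:
        return 0.0
    return citation_frequency(runs, brand) / len(runs) * monthly_query_volume
```

With the sample log, `citation_frequency(runs, "Acme")` is 2 and `share_of_voice(runs, "Acme")` is 0.5. Citation quality and sentiment are left out on purpose, since both need a judgment about each answer rather than a count.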

How Do You Collect AI Citation Data Across Major LLM Platforms?

Gathering AI citation data requires structured, repeatable processes. We start by defining consistent prompts that reflect customer-relevant queries, rather than relying on generic keywords. This approach ensures relevance and actionable insights.

A structured workflow improves accuracy. Here's how to proceed:

- Select 15-25 queries that align with user intent and target topics.

- Run these queries on ChatGPT with a citation tracker, then extend the same runs to Gemini, Claude, and Perplexity.

- Log every appearance of your brand, noting position, context, and sentiment.

- Track competitor mentions for benchmarking and comparative analysis.

- Use automated tools like Siftly or Ahrefs AI Visibility to consolidate and visualize data trends.

We maintain regular monitoring schedules to capture fluctuations and catch "citation cliffs" early. A citation cliff occurs when previously recognized content suddenly drops in AI mentions. This is particularly common on platforms like Perplexity, where source freshness matters.

This structured approach provides both qualitative and quantitative insights. It allows you to determine not only if your brand is mentioned but also how it is perceived, helping guide content creation and entity recognition strategies.
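The logging step in the workflow above can be sketched as a small record type plus a function that scans one answer for tracked brands. This is an illustrative sketch under stated assumptions: `CitationRecord`, `log_citations`, and the brand names are hypothetical, fetching answers from each platform is out of scope, and sentiment is left as an empty field to be labeled by a reviewer or a separate tool.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class CitationRecord:
    prompt: str
    platform: str
    brand: str
    present: bool
    position: Optional[int]          # 1 = first tracked brand named in the answer
    context: str                     # short snippet around the first mention
    sentiment: Optional[str] = None  # filled in later, e.g. by manual review


def log_citations(prompt, platform, answer, brands, window=40):
    """Build one record per tracked brand for a single AI answer."""
    # Find the first whole-word, case-insensitive mention of each brand.
    first_hit = {}
    for brand in brands:
        match = re.search(rf"\b{re.escape(brand)}\b", answer, flags=re.IGNORECASE)
        if match:
            first_hit[brand] = match.start()

    # Rank the mentioned brands by where they first appear in the answer.
    order = sorted(first_hit, key=first_hit.get)

    records = []
    for brand in brands:
        if brand in first_hit:
            start = first_hit[brand]
            snippet = answer[max(0, start - window): start + len(brand) + window]
            records.append(CitationRecord(prompt, platform, brand, True,
                                          order.index(brand) + 1, snippet.strip()))
        else:
            records.append(CitationRecord(prompt, platform, brand, False, None, ""))
    return records
```

Logging a record even when the brand is absent keeps the denominator honest: mention rates are then computed over every prompt run, not only the ones where the brand appeared. The word-boundary match is a simplification and would need adjusting for brand names that start or end with punctuation.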

Which Tools And Platforms Support AI Citation Analytics?

You need the right tools to track your brand's mentions across AI platforms efficiently. Manual checking isn't scalable. Dedicated platforms automate the process, giving you dashboards and trend reports.

Here are several core platforms, each with a slightly different focus:

- Siftly: One of the strongest tools for Generative Engine Optimization (GEO) and citations, it helps calculate your share of voice against competitors.

- Ahrefs AI Visibility: Tracks mention frequency and estimated impressions. Good for seeing volume trends over time across different AI models.

- Hashmeta.ai: Analyzes citation types and authority signals. It helps you understand if the AI presents your brand as a leading source or just a reference.

- Search Engine Land Toolkit: Useful for tracking sentiment and prompt performance, often providing clear dashboards for at-a-glance monitoring.

| Platform | Primary Focus | Key Output |
|---|---|---|
| Siftly | GEO, citations | Share of voice |
| Ahrefs AI Visibility | Mentions, impressions | Trend analysis |
| Hashmeta.ai | Citation types | Authority signals |
| Search Engine Land Toolkit | Sentiment, prompts | Dashboards |

Using these tools, you can automate query runs, spot sudden drops in mentions (citation cliffs), and integrate data into reports. For the full stack of tools we recommend, see our answer engine optimization tools guide.

How Do You Benchmark AI Visibility Against Competitors?

Video: https://www.youtube.com/watch?v=Y-0XnD04kjQ (Credit: Search Engine Land)

Benchmarking your AI visibility means seeing how you stack up against competitors inside chatbots. It's the only way to know if you're leading the conversation or falling behind.

Start by picking 3-5 direct competitors. Then take the same set of prompts (questions your customers might actually ask) and run them through AIs like ChatGPT, Gemini, and Claude.

Here's what to track for each brand:

- Mention Frequency: Who gets cited the most?

- Positioning: Is a competitor cited as the "top" or "best" solution?

- Sentiment: Is the tone around them more positive?

- Share of Voice: Your mentions vs. theirs, shown as a percentage.

This comparison shows you exactly where you stand. You might find a competitor dominates answers about "sustainable packaging" or "budget options." That's a clear signal: their content is better aligned with what the AI (and users) are looking for.
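A per-topic breakdown makes gaps like these easy to spot. The sketch below assumes mention counts have already been tallied per topic and brand from the shared prompt set. The data, brand names, and function names are hypothetical.

```python
from collections import defaultdict

# Hypothetical mention counts per topic, tallied from the same prompt set
# run for every brand. Names and numbers are illustrative only.
mentions = [
    ("sustainable packaging", "YourBrand", 3),
    ("sustainable packaging", "CompetitorA", 9),
    ("budget options", "YourBrand", 6),
    ("budget options", "CompetitorA", 4),
    ("budget options", "CompetitorB", 2),
]


def share_of_voice_by_topic(mentions):
    """Return {topic: {brand: percentage of that topic's mentions}}."""
    totals = defaultdict(int)
    for topic, _, count in mentions:
        totals[topic] += count
    table = defaultdict(dict)
    for topic, brand, count in mentions:
        share = 100 * count / totals[topic] if totals[topic] else 0.0
        table[topic][brand] = round(share, 1)
    return dict(table)


def topic_gaps(table, brand):
    """Topics where another brand leads, with the leader and the gap in points."""
    gaps = {}
    for topic, shares in table.items():
        leader = max(shares, key=shares.get)
        if leader != brand:
            gaps[topic] = (leader, round(shares[leader] - shares.get(brand, 0.0), 1))
    return gaps
```

For the sample data, `topic_gaps(share_of_voice_by_topic(mentions), "YourBrand")` flags only "sustainable packaging", where CompetitorA leads by 50 percentage points. That is the kind of topic to prioritize when refining content.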

Use those gaps to adjust. If they own a topic, analyze the type of content they publish that the AI is referencing. Then, refine your own content to better answer those specific queries. Our guide on competitor displacement strategies breaks down the exact playbook for taking share of voice from competitors who currently outrank you in AI responses.

Doing this regularly turns benchmarking from a snapshot into a strategy, ensuring your brand holds its ground in AI-generated answers.

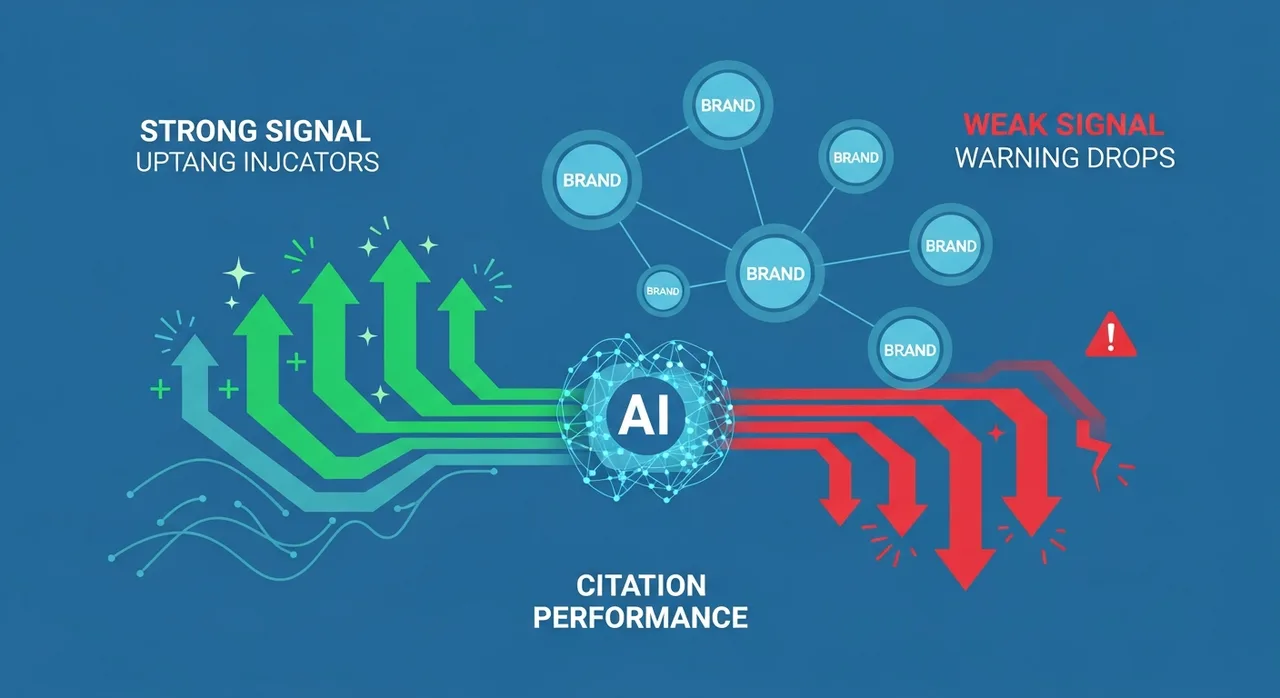

What Signals Indicate Strong Or Weak AI Citation Performance?

Modern AI systems appear to weigh "Semantic Authority", meaning how consistently a brand is associated with a topic, over simple keyword matches. One paper on brand mentions describes the signals involved:

"In 2026 and beyond, brand mentions are not optional, they are essential components of a competitive AI search visibility strategy. Advanced AI search looks for 'Contextual co-occurrence': How often your brand appears with key topic phrases... Systematic building of mentions help LLMs recognize your brand as a trusted, topic-relevant source, especially when your content serves as a preferred reference point through original research and proprietary data." - ResearchGate[2]

Strong signals show you're a key source:

- High, steady mention frequency across different AIs.

- Positive or authoritative language used about your brand.

- Definitive citations where you're presented as the primary answer, not just one of many.

Weak signals that should trigger action:

- Inconsistent mentions that appear in one AI but not others.

- Neutral or vague language where competitors get more specific praise.

- Citation cliffs where your mentions suddenly drop after a period of steady presence.

Spotting a citation cliff, common on platforms like Perplexity, is a major warning. It often means your content has become less relevant to the AI's current knowledge. Catching these weak signals early lets you update your strategy before your visibility fades. The technical foundation for maintaining these signals starts with proper schema markup and entity optimization.
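A citation cliff can be flagged automatically from weekly mention counts. The sketch below compares each week with the average of the preceding weeks. The function name, the four-week baseline, and the 50% drop threshold are assumptions chosen for illustration, not an established standard.

```python
from statistics import mean


def find_citation_cliffs(weekly_counts, baseline_weeks=4, drop_threshold=0.5):
    """Return indexes of weeks whose count fell sharply below the recent baseline.

    A week is flagged when its count is at most (1 - drop_threshold) times
    the mean of the preceding baseline_weeks. Both defaults are illustrative.
    """
    cliffs = []
    for i in range(baseline_weeks, len(weekly_counts)):
        baseline = mean(weekly_counts[i - baseline_weeks:i])
        if baseline > 0 and weekly_counts[i] <= baseline * (1 - drop_threshold):
            cliffs.append(i)
    return cliffs
```

For example, `find_citation_cliffs([12, 11, 13, 12, 12, 5, 4, 11])` returns `[5, 6]`: the two weeks where mentions fell to half or less of the trailing average. Running the check per platform also surfaces the inconsistent-mentions signal, since a drop on one AI will not show up in the others.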

FAQ

How can I track my brand's AI presence effectively across multiple platforms?

Track your brand's AI presence by running the same prompt set on each platform and logging where your brand is cited and what share of AI answers it earns. Citation frequency tracking, prompt tracking tools, and a dashboard make it easier to see trends, citation cliffs, and exposure patterns, and to note where in a response your brand appears. That data supports a stronger GEO strategy and better content optimization.

What are the best ways to measure AI citation quality and sentiment?

Citation quality is scored by evaluating how authoritative and relevant each brand mention is in an AI-generated response. Pair that with sentiment analysis of the same responses to see how the AI frames your brand. Reviewing the context around each citation, alongside crawler and traffic analysis tools, helps you identify high-value mentions. The results inform generative engine optimization and improve your overall presence on AI platforms.

How does citation frequency tracking influence AI search visibility?

Citation frequency tracking shows how often your brand is mentioned in AI-generated answers, which makes it a direct indicator of AI search visibility. Tracking share of voice, platform coverage, and answer share alongside it reveals content gaps and opportunities. Combine these insights with prompt-level KPIs and response positioning to refine your content strategy and keep exposure consistent across AI platforms.

How can AI dashboard analytics improve brand exposure metrics?

AI dashboard analytics centralizes information about LLM citations, AI answer share, and query volume impressions, so you can monitor coverage and citation patterns across multiple LLMs in one place. Real-time dashboards, with enterprise guardrails in place, help track content freshness, content velocity, and answer share gains. Together these give you actionable data for improving brand exposure metrics, visibility score trends, and overall performance in AI-generated responses.

What strategies strengthen generative engine optimization (GEO) and the GEO content pipeline?

Maintain a structured GEO content pipeline that tracks AI citations and brand mentions. Monitoring response positioning, citation cliffs, and content velocity tells you when content needs an update. Prompt-level KPIs, custom prompt sets, and multi-LLM coverage strengthen AI visibility, while continuous competitive benchmarking and analysis of answer share gains show whether your brand presence is improving. Our AI citation playbook covers the 23 content types that get cited most.

AI Citation Analytics: Measuring Your Brand Presence Effectively

Tracking your brand in AI is about becoming the recommended source. The insights from your analytics (citation frequency, share of voice, sentiment) all guide one action: optimizing to be the answer AI trusts.

The brands that win in AI search aren't the ones with the most content. They're the ones with the most structured, authoritative, and frequently cited content. AI citation analytics gives you the data to close the gap between where you are and where you need to be.

Explore AnswerManiac to optimize your AI brand presence, or get a free AI Visibility Audit to see where you stand today.

References

- [1] The LLMO White Paper: Optimizing Brand Discoverability in Models Like ChatGPT, Claude. Medium.

- [2] Leveraging Brand Mentions to Enhance LLM and SEO Visibility. ResearchGate.
